Algorithm Rebels Club #4 Society — For the People Hidden Behind Convenience

We held the fourth and final session of Algorithm Rebels Club, a meetup group run through Netflixnga. Following Session 1’s ‘Experience,’ Session 2’s ‘Agency,’ and Session 3’s ‘Relationships,’ the theme this time was ‘Society — For the People Hidden Behind Convenience.’

Here’s what we covered:

- Book: A Robot’s Place (Jeon Chi-hyung, 2024)

- Book: The Future That Came Early (Jang Kang-myung, 2025)

- Book: AI Feeds on Humans (2025)

- AI Ethics Newsletter: Cheesecake, Not Slop (ai-ethics.kr, 2024.11.27)

- Article: Inside an AI start-up’s plan to scan and dispose of millions of books (Washington Post, 2026.01.27)

Sessions 1 through 3 started from ‘me’ and gradually widened the lens outward. This final session turned that lens toward ‘society.’ We laid bare the stories of people and material resources hidden behind our smooth screens, and together searched for where all four sessions converge.

1. The Air from Ten Years Ago, and Vibe Coding

The session opened with a memory from exactly ten years ago — March 2016. The day of the match between AlphaGo and Lee Sedol, the 9-dan Go master. I was a junior in computer science at the time, sitting in my very first philosophy class — Western Ethics — having just started a double major.

The professor mentioned, “Today is the day of the AlphaGo versus Lee Sedol match.” After the break, a student spoke up:

“Professor, AlphaGo won.”

I still can’t forget the air in that lecture hall. Fear. Unease. I could see the professor’s face — someone who rarely showed emotion — waver for just a moment.

The Future That Came Early, published last year, traces how the world of Go has changed since AlphaGo. The current world No. 1, Shin Jinseo (9-dan), has earned the nickname “Shin-telligence” — meaning he plays the moves closest to AI’s choices. But Shin himself puts it this way:

“In the past, someone at my level would have been considered nearly always right. But now, if AI says a move is suboptimal, viewers might think, ‘He’s ranked number one and he plays like that?’”

— Shin Jinseo, The Future That Came Early, p. 195

AI has gone from being a mirror of human ability to becoming the standard itself. The Future That Came Early frames it like this:

This is not about prize money declining. It’s a matter of pride. People derive pride from believing they are doing meaningful work well. AI will flatten both the meaning of work and human competence across far more domains than anyone expected.

— The Future That Came Early, p. 62

Another professional Go player was even more blunt:

“I’m going to change my answer — the era without AI was much better. I miss the romantic Go we used to play. When someone discovered a new move, it was infused with human effort and passion. We’d celebrate, study it, and grow through it. Technology has made our bodies more comfortable, but our souls seem to wither.”

— The Future That Came Early, p. 281

“Our bodies are more comfortable, but our souls seem to wither.” That feeling runs beneath everything we discussed today.

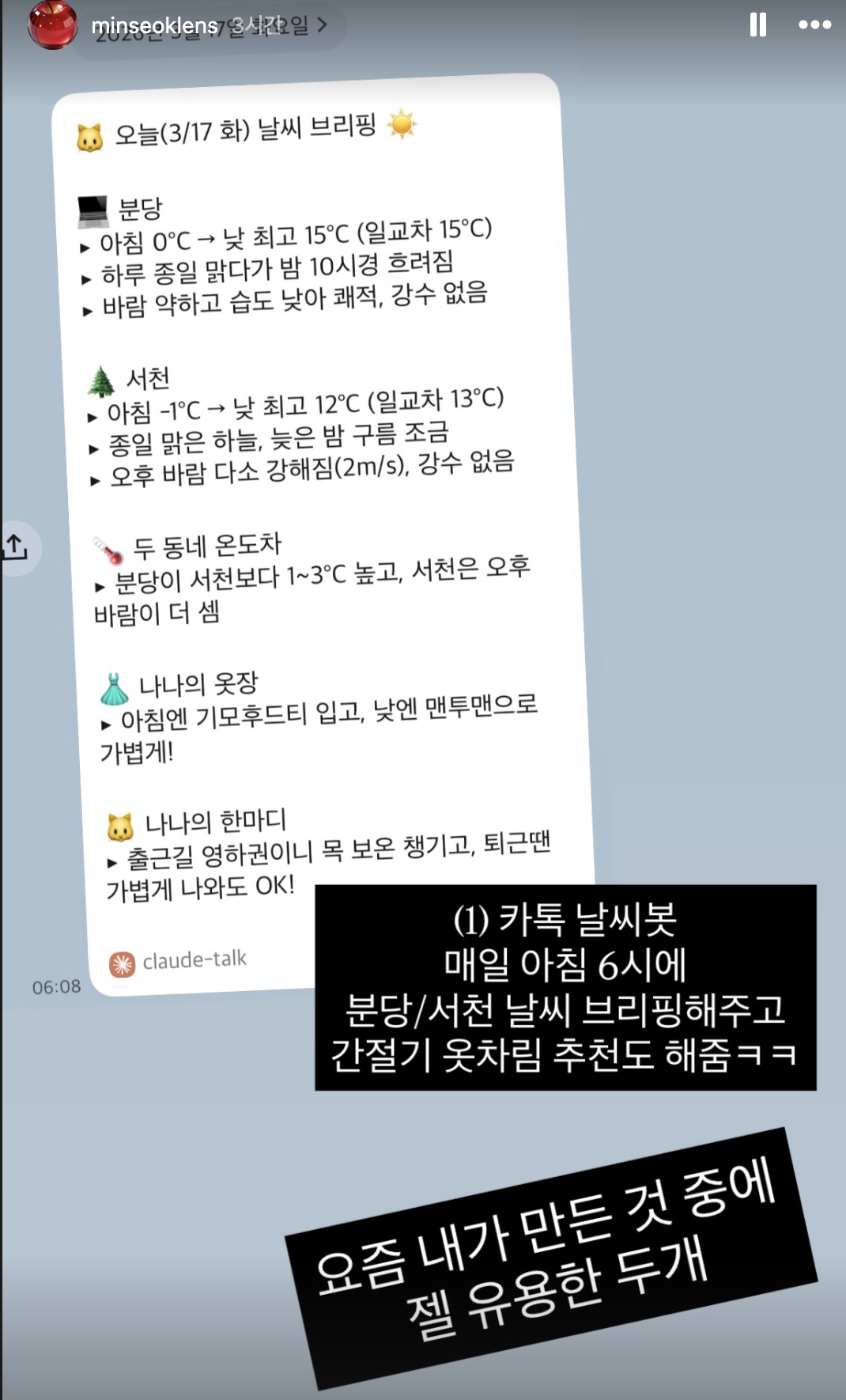

So will the world around us change the way the Go world has? I had a similar moment myself recently. My current hobby is ‘vibe coding’ — using AI to quickly build little tools I need. I’ve set up a daily weather alert via KakaoTalk, and built a Telegram bot that archives links.

But as I kept building bots, I needed more and more server resources. And it hit me — the electricity powering these servers, the enormous water consumption cooling data centers… Every little bot I build is gnawing away at someone’s resources. That sense of unease became the starting point for our final session.

2. Smooth Screens, Rough Reality

We hail a taxi with a single tap. A warm meal arrives at our door. Incredibly smooth user experiences. But behind that smoothness, someone is enduring extreme friction.

Kakao Navigation sometimes instructs drivers to make physically impossible left turns — across four lanes of traffic. But if the driver doesn’t comply, they risk rating attacks or account suspension. The person who designed the algorithm has never stood on that road. In optimizing the ‘user (passenger)’ experience, the ‘worker (driver)’ experience was simply left out of the equation.

Professor Jeon Chi-hyung, author of A Robot’s Place, calls this “the fallacy of premature automation.”

People working behind and beside robots to keep them running are treated as if they don’t exist. Then accidents happen. Workers collapse from exhaustion following routes and schedules dictated by algorithms, or are crushed by robots in factories where they work without supervisors or colleagues.

— A Robot’s Place, pp. 10-11

To rewrite the algorithm is to rewrite society itself.

— A Robot’s Place, p. 152

After laying this out, I asked the members: “Have you ever been using a service mindlessly and suddenly become aware of the person behind it?” The conversation took an unexpected turn. The ‘people hidden behind convenience’ turned out not to be some distant strangers — but themselves.

- (Haeju) “I haven’t been completely unaware. Deliveries keep getting faster, and at first it seemed great, but at some point I started wondering — do we really need it this fast? You can order from a bookstore and get same-day delivery, even on Saturdays. But couldn’t you just go to the bookstore?”

- (Haeju) “When you look at the Baemin delivery app, the characters walking toward you are really cute. But then you think — a real person is carrying this. They’ve made it adorable with characters, but behind all that, there’s real human labor.”

- (Hyeseung) “Before the Lunar New Year I ordered groceries, but I had to leave home for a week. I checked the map and the driver was already delivering in our neighborhood. I could have gone out to grab it, but that would mean interrupting their work. Thinking of them as a working person, not just a delivery function, made me reconsider.”

- (Hyeseung) “There’s a CJ delivery driver who comes to our neighborhood. They’re always loading up massive amounts of packages on the first floor, clearly rushed. They get paid per delivery, so speed means more money. My mom started looking after them — bringing snacks, chatting. They became close. But when a delivery is late, we get annoyed. That tension made me realize how easily I’d been treating this person as a delivery machine.”

The conversation shifted when the topic of ‘fast shipping’ came up and Haeju shared a funny experience:

- (Haeju) “Recently, if you order a book early in the morning, you get an option: ‘Want it fast, or would you rather get a 500-won coupon and receive it later?’ They pay you.”

- (Taehun) “That’s hilarious. They don’t pay you for speed — they pay you for patience. Wild.”

Then Yeongdong, who joined as a guest, brought up the reality of the architecture industry, and the mood shifted.

- (Yeongdong) “This is becoming a big issue in our field too — architectural rendering. A team of 3-4 specialists from a CG company used to spend a week to ten days producing one image. Now with AI, you get one every 10 minutes. What used to take 10 people ten days. It’s chilling.”

- (Yeongdong) “The executives say, ‘Just run AI yourselves, one rendering used to cost millions of won.’ Meanwhile, mid-career people like me are thinking, ‘What happens to those CG teams? And can I survive this? Could AI replace what I do too?’”

- (Yeongdong) “New hires come in already skilled with AI tools from school — their graduation shows are impressive. So management says, ‘Let the new hire do it, they’re good at it.’ But that person joined to do design, not to sit there generating images all day. And since what used to take a week now takes a day, the remaining six days get packed with extra work for everyone else.”

Hyeseung added what’s happening at US Big Tech:

- (Hyeseung) “Someone I know works near Meta. Before, if you worked weekends or late, HR would warn you — ‘Don’t violate our culture.’ Now, with layoffs looming, every employee works until 11 PM. On weekends too. Trying not to be on the list. HR isn’t saying anything this time.”

- (Hyeseung) “They’re cutting headcount because they need to build data centers. Investment money has to come from somewhere, so they reduce people. They’re cutting humans to build servers. Each person replaced by a rack.”

Work stories kept pouring out:

- (Minseok) “‘But isn’t the whole point of AI to spend less and get more output? Why does this cost so much?’ — that’s what leaders think. Fewer people, more output, faster. That’s what AI is supposed to do.”

- (Haeju) “Once they’ve tasted AI, it becomes ‘You can make this fast, right? So why isn’t it done yet?’”

- (Haeju) “I work in AI transformation myself. I’d been thinking only positive thoughts: AI lowers technical barriers, so we can do more. But in for-profit companies, the time saved means people either get cut, get overworked, or end up staying late every night.”

- (Minseok) “When I think about it, the person hidden behind convenience is me. My leader is using me conveniently.”

Everyone realized they were the person hidden behind the screen. When I prepared this session, I thought we’d be finding some distant ‘others’ we’d been forgetting. Instead, with AI’s arrival, those others turned out to be us.

3. AI Is an Extraction Machine

Behind the screen, it’s not just people. There’s matter too.

AI Feeds on Humans defines AI’s true nature like this:

AI is closer to an extraction machine. Every process of classifying, discriminating, and predicting data reflects the interests and power structures of those who built it.

— AI Feeds on Humans, p. 21

Not a mirror — an extraction machine. So what does it extract? Three things.

It feeds on people — data labeling labor

For AI to become as smart as a human, someone has to classify data by hand. These workers are in India, Kenya, and the Philippines. To train Tesla’s self-driving system, humans must watch accident footage frame by frame and label it. One labeler was classifying footage of an old man being hit by a car on the street when he realized it was his own grandfather. But with daily quotas to meet and instant replacement for anyone who slips, he couldn’t leave.

Workers have almost no ability to adjust or control their tasks. All work is maximally simplified and segmented to maximize productivity and efficiency. In the process, they experience a peculiar blend of extreme boredom and anxiety. This is the reality at the front lines of the AI revolution.

— p. 53

It feeds on the earth — material infrastructure

AI feels like it floats somewhere in the cloud, but it’s actually a massive physical entity. A single hyperscale data center consumes as much electricity as 80,000 households. About 40% of operating costs go to cooling. GPT-1 in 2018 had 117 million parameters; GPT-4 in 2023 reportedly had 1.76 trillion, roughly 15,000 times more.

Google has invested in or owns 19 submarine cables. Meta, in partnership with Japan’s NEC, built the world’s highest-capacity submarine cable at 500 terabits per second. Just as telegraph lines were laid along railroads in the 19th century, today we’re laying fiber optics under the ocean. Technology’s material roots keep repeating the same pattern.

It feeds on knowledge and creativity

AI doesn’t just consume matter — it consumes human intellectual resources. The famous tech motto “Move fast and break things” has evolved:

“Move fast and steal things.”

— p. 146

“Steal” turned out to be literal, not metaphorical. In January this year, the Washington Post reported on Anthropic’s ‘Project Panama.’ They spent tens of millions of dollars buying millions of books, cutting their spines with hydraulic cutters, scanning pages at high speed, then sending them to recyclers. Internal documents read:

“Project Panama is our attempt to destructively scan every book in the world. We don’t want it to be known that we are doing this.”

Before that, it had been even more brazen. Anthropic’s co-founder personally downloaded books en masse from pirate library sites. At Meta, the plan to download books illegally was escalated all the way up to CEO Zuckerberg for approval. An employee raised concerns about “downloading pirated content on company laptops,” but was ignored. The company even rented Amazon cloud servers to avoid detection.

For the record — the AI I used to prepare this session’s materials is Claude, made by Anthropic. The irony of saying this at the meetup wasn’t lost on us.

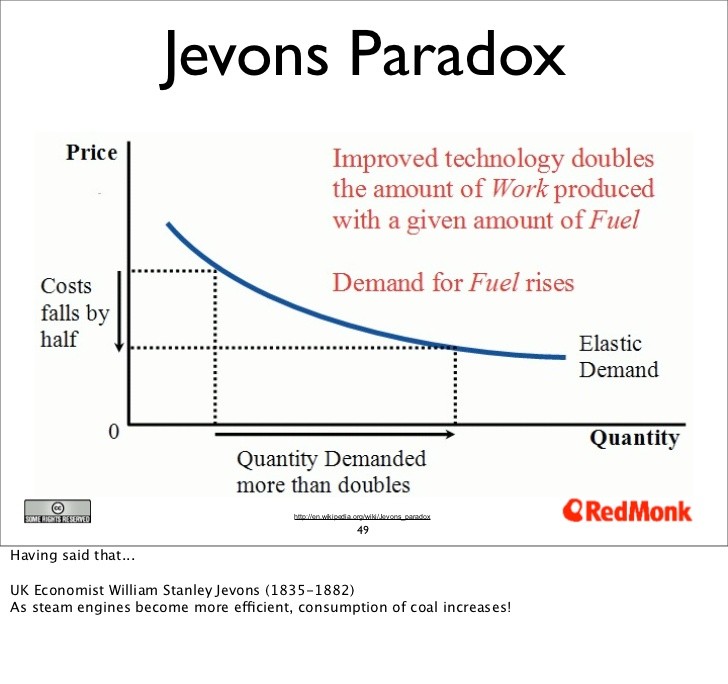

Jevons Paradox, and vibe coding

There was one concept we needed to address: Jevons Paradox. In the 19th century, when coal-burning steam engines became more efficient, people expected coal consumption to drop. It increased instead, because greater efficiency made coal cheaper to use and created more uses for it. Better fuel economy means more driving; more efficient air conditioning means more AC units running. AI is the same. When people who couldn’t code can now build services through vibe coding, will server usage decrease or explode? Obviously explode. People like me are building ten bots at a time.
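As a rough illustration of the paradox, here is a minimal Python sketch. It assumes a constant-elasticity demand curve, which is a textbook simplification and not something taken from the books or the session. The function name `resource_use`, the baseline of 100 units, and the elasticity values are all hypothetical.

```python
def resource_use(efficiency: float, elasticity: float, baseline: float = 100.0) -> float:
    """Total resource consumed after an efficiency change (illustrative model only).

    efficiency: service delivered per unit of resource, relative to today (1.0 = no change).
    elasticity: assumed price elasticity of demand for the service (hypothetical value).
    baseline:   resource consumed today, in arbitrary units (hypothetical value).
    """
    # Higher efficiency makes each unit of service cheaper, so demand for the service grows.
    demand = efficiency ** elasticity
    # Each unit of service now needs 1/efficiency as much resource as before.
    return baseline * demand / efficiency


# Doubling efficiency with weakly responsive demand: total use falls.
print(f"{resource_use(2.0, elasticity=0.5):.1f}")  # 70.7
# Doubling efficiency with strongly responsive demand: total use rises (Jevons Paradox).
print(f"{resource_use(2.0, elasticity=1.5):.1f}")  # 141.4
```

Under this assumption, total consumption only rises when demand grows faster than efficiency does (elasticity above 1). Whether AI compute falls on that side is exactly the question the paragraph above raises.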

Knowing all this, can our behavior actually change? The members were honest:

- (Minseok) “I don’t think my habits change much. Giving up convenience and productivity has become really hard, and I wonder what difference one person opting out even makes. But when I ask AI about this, it always says, ‘As technology advances, we’ll be able to do more with less energy, so don’t worry.’ Never mentions Jevons Paradox. I’ve been asking for three years and the models keep giving the same answer.”

- (Hyeseung) “Even knowing all this, I don’t think it’s easy to change. At work, the constant refrain is ‘Try using AI for that’ or ‘Let’s do weekly AI Q&As.’ In a capitalist society, saying ‘I’d rather not use it for these reasons’ just isn’t easy. Unless the structure changes, individual action feels almost impossible.”

- (Mijeong) “I figured out what bothers me about using AI. It’s like impulse-buying clothes while thinking ‘I’m destroying the environment,’ but buying anyway. I use tokens the same way — with guilt. Having to keep using it while feeling guilty is just sad.”

- (Taehun) “I didn’t even know you should delete emails. For real. Emails produce carbon? I guess there’s nothing we can do? Elon Musk’s vision is everyone on universal basic income in a utopia, but would that actually be happier?”

- (Yeongdong) “When electricity was discovered and light bulbs came on, people imagined enjoying leisure in the evening. But what we actually do is work overtime. Ultimately, don’t we need social consensus? It took decades of social negotiation to get the 52-hour workweek. What worries me more is that unlike factory conveyor belt workers who were visible and could protest, the workers behind AI are completely hidden — and corporations are getting more sophisticated at keeping them that way.”

- (Haeju) “We don’t have to be only pessimistic. As knowledge and technology advance, we learn more about the environment and develop better ways to protect it. And the fact that we feel this discomfort, this dissonance, and know something is wrong matters. Keeping the conversation going could eventually lead to social consensus. There’s a nonprofit that used to have people manually monitor harmful content, causing severe trauma. With multimodal AI, machines can now do that screening instead. We should think more about how to use technology better.”

4. The Eternal Oscillation Between Code and Matter

Finally, we pulled out the thread connecting all four sessions.

In Session 1, we looked at ‘our senses.’ The story of how our tolerance for waiting dropped from 4 seconds to 2, how we’re losing the chance to know ourselves by refusing to endure boredom. So we proposed: “Let’s reintroduce friction into our smooth lives.” What we saw today was the other side of that friction. The friction that vanished from our side has been transferred — lethally — to someone behind the screen.

In Session 2, we looked at ‘our minds.’ What tech companies steal isn’t just time, but three kinds of light — the Spotlight of focus, the Starlight of who we want to become, the Daylight of knowing what we truly want. From today’s perspective, every second an algorithm holds our attention, GPUs spin somewhere, data centers consume power, cooling water is spent.

In Session 3, we looked at ‘our relationship with AI.’ Behind AI’s smooth empathy lies data from millions of books whose spines were sliced and scanned. Even emotional transactions have material costs — and we were finally able to see that gap.

Sessions 1-3 looked at the ‘code’ side — our senses, our attention, our relationships. Session 4 looked at the ‘matter’ side — servers, electricity, water, submarine cables, and the people working behind them all. Technology always oscillates between code (software, algorithms, experience) and matter (hardware, resources, labor). Only humans can observe this oscillation. What we did over four weeks was exactly that — seeing both sides of the elephant.

Jang Kang-myung writes in The Future That Came Early:

We must be wary of the nonsense that science and technology are value-neutral. Science and technology are a powerful force that reaches deep into not just the material world but the spiritual world.

— The Future That Came Early, p. 304

5. My Personal Tech User Manual

For our final activity, we took time to write down “what I will protect while riding the wave of technology.” We handed out paper and pens and asked everyone to complete three sentences:

1. When I use [ ], I will remember [ ].

2. When I build or plan [ ], I will check [ ].

3. I will absolutely never [ ].

After 15 minutes of writing, we sat in a circle and read them aloud one by one.

Minseok’s Tech User Manual

1. When I use Claude Code, I will remember the time and effort of the authors whose books made these smooth, brilliant answers possible. Those millions of authors — imagine how much blood and tears went into writing those books, in an era without AI. I want to meet more independent creators and produce more of my own work. I want to create things that aren’t just fodder for AI.

2. When building or planning AI transformation programs at work, I will check whether what I’m making is rendering someone’s work entirely obsolete, whether I’m dismissing their effort in the name of efficiency and productivity.

3. I will absolutely never say “Nothing changes no matter what we do.” Even when moments of helplessness keep coming, I need to keep feeling uncomfortable and keep speaking up. I should do a Season 2 of this club. Probably in May.

Haeju’s Tech User Manual

1. When I use Claude Code, considering Project Panama, data centers, and all of that — I will think about whether this is something I could have done perfectly well on my own, and whether I’m just outsourcing it to AI out of laziness.

2. When planning projects, I will seriously consider whether I’m actually making future generations’ lives better.

3. I will absolutely never hide behind “I’m not a tech person, I wouldn’t understand” as an excuse to look away.

Hyeseung’s Tech User Manual

1. When I use shopping apps, I will ask if I really need this. Everything moves so fast that I end up buying things on impulse, not out of need but out of wanting it right now. And with AI tools, I’ve started asking questions before thinking deeply enough. I need to stop relying on AI so completely and agreeing with it unconditionally.

2. I work in product development. When building services, I will check whether I’m stripping away user control in the name of conversion rates and frictionless sign-ups. Whether I’m treating users as ignorant and taking away too much of their agency.

3. I will absolutely never believe that only fast and convenient things are meaningful. User control means people can process information and act on their own will — and that requires a little inconvenience and time.

Taehun’s Tech User Manual

1. When I use YouTube, I will remember what I actually wanted. Session 1 stuck with me — it was really inspiring.

2. When building or planning ERP systems, I will check what alternative work exists for people whose jobs are being eliminated.

3. I will not just go along with the current. Even if I use it, I’ll use it knowingly.

Yeongdong’s Tech User Manual

1. When AI generates architectural images, I will remember the works and intentions of the master architects it’s drawing from. You can type a famous architect’s name and instantly generate their style — mimicking Frank Gehry has become trivially easy. But there were intentions behind those styles. Even if I borrow a style, I’ll at least look up the intent and understand “this is why they did it.”

2. When using generated text, I will check the context that got lost in summarization. For proposals, those grand-sounding closing paragraphs — I used to write them painstakingly, but now it’s so easy to just copy what AI produces. At least I should fact-check and verify the context behind those words.

6. Closing a Four-Week Voyage

We closed the session by reading the final lines of The Future That Came Early:

I believe there are things AI still cannot do that only humans can. Imagining something good. Believing we can change the future. And then actually changing it. We are the masters of our fate. We are the captains of our souls. For now.

— The Future That Came Early, p. 340

“For now.” Those two words are the point. The fact that we can write manifestos, ask uncomfortable questions, and look squarely at what technology really is — that’s because we are still the masters of our fate.

Thank you for willingly taking on these strange, seemingly useless questions over four weeks, and for willingly feeling uncomfortable. Algorithm Rebels Club officially ends here, but each of your everyday rebellions is just beginning.