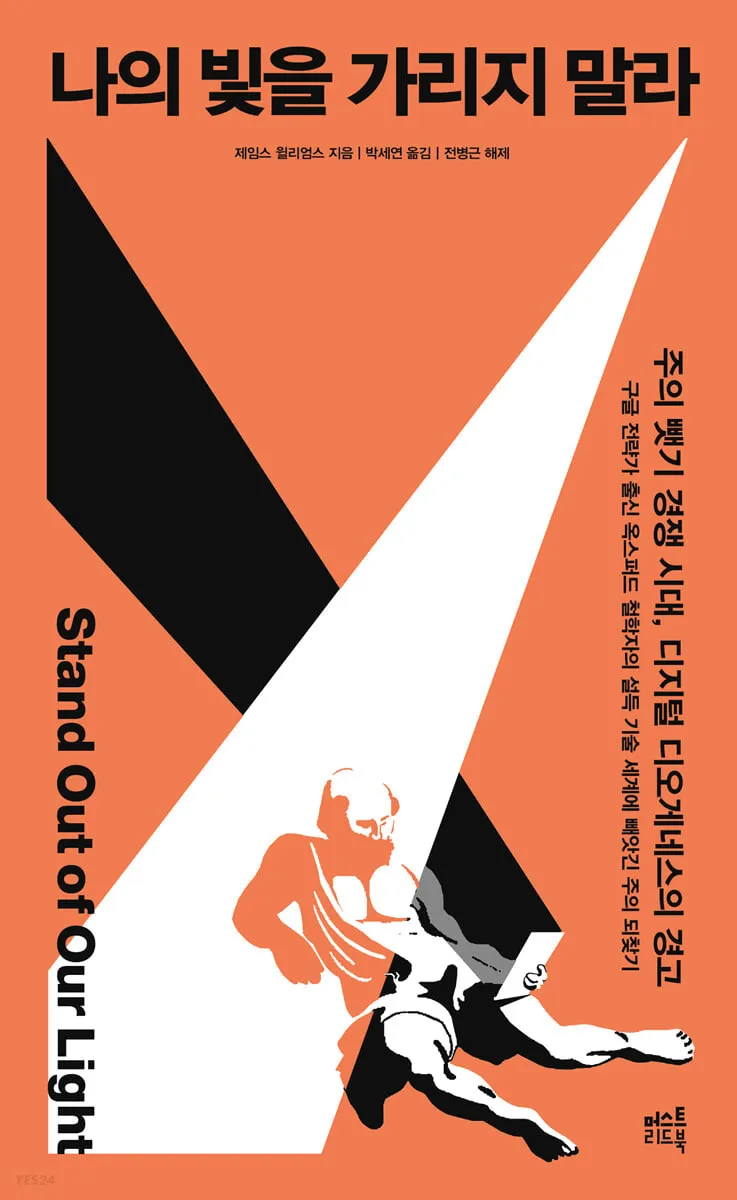

James Williams - Stand Out of Our Light

- Where I found it: I had been interested in tech ethics for a while, and in January I came across it at a bookstore in Gangneung.

- How I got it: I bought it at the bookstore and read the physical copy.

- Reading period: February 13, 2023 ~ March 19, 2023

Keeping Control in the Digital World

It took me a full month to get from the first chapter to the last. The philosophical concepts in Part 2 were a bit challenging, but the book isn't long; with a little focus, it wouldn't have taken a month. Between February 13 and March 19, I binge-watched The Glory Part 2 on Netflix and watched all 10 episodes of Love War back-to-back. I watched KBO exhibition game clips on Twitter and chatted with friends on Instagram. I even spent two hours watching one-minute short-form videos on YouTube. As my attention was pulled into the digital world, the book gradually fell by the wayside. If I hadn't had a book club meeting scheduled, I don't know when I would have finished it.

I was often reminded of ⟨The Art of Doing Nothing⟩, a book that left a deep impression on me when I read it last fall. After reading it, I wished every product manager grappling with UX in the digital world would read it. This book, I'm now convinced, addresses a topic that everyone in the tech industry should care about, not just those who work on UX. Even as I write this, I keep thinking about how to spread the agenda it advocates to more people.

What resonated most was the point that the people building digital systems often overlook the impact and significance of their work. As ubiquitous, portable, networked computers spread everywhere, industrialized persuasion systems opened a door that bypasses all other social systems and reaches our attention directly. These systems now occupy over a third of our waking hours. Today, the power to shape the attention habits, and thus the lives, of billions of people rests in the hands of a few dozen individuals (p. 134). Yet the sense of responsibility among people in tech is disproportionately small compared with the impact of the systems they create. Is this the product of a well-oiled bureaucracy? Each person in the company is simply working toward their own goals. Busy closing today's Jira tickets and writing today's work log, I have no time to worry about the social problems my diligence might be causing.

The author, who worked at Google for ten years as a strategist and was recognized for significant contributions to search advertising, understands this well. Ultimately, there is no one to blame. The 'flaw' lies not in individuals' decision-making but in the new architecture of complex, multi-actor systems. As quality management guru William Edwards Deming put it, "A bad system will beat a good person every time" (p. 156). The problem lies with the system. Humans create systems and then place themselves inside them. Sometimes it is not the individual but the system that acts. We live within systems. But what if a system is flawed? What if it hinders our freedom? What if some people don't even realize their freedom is being compromised? What if we live in a naive world that blames individual willpower and attention rather than the system? The thought alone makes me sigh.

Even if we go to a digital detox camp, set screen time limits, or turn on Do Not Disturb, it is hard to escape the system's grip. The tech industry as a whole needs to grapple with infraethics. As a first step, products should let users control their own attention by default (p. 170). At our book club, Hyejin, who works at Woowa Brothers, brought up the iPhone's Screen Time feature: "Apple just supplies the hardware, so I wonder if that's why they can offer something like Screen Time. Could YouTube build a feature like that, where I set a limit, say one hour, and it controls my access? Could they put it on the main screen and manage it for us? Of course, it's tied to revenue too." Could such controls be offered to adult users as well? Would YouTube ever provide them? It wasn't an easy question to answer.

Instead of dismissing the distractions of the digital world as mere 'addiction,' it might be better to describe persuasive technologies in more precise language. The author maps terms for 'persuasive' technology onto ethically distinct criteria: in his chart, the Y-axis represents how much the technology's design constrains the user, and the X-axis represents how closely the user's goals align with the goals of the design (pp. 171-172). Categorizing these terms this way gives us a moment of awareness in which to consider where the systems we build belong. So far we have mostly demanded transparency about how technology manages our information; now it is time to consider how it manages our attention (p. 177).

One approach is to establish a 'Designer's Oath,' akin to the Hippocratic Oath in medicine. Many questions remain about where, how, by whom, and with what content such an oath would be taken, but an oath gives us common ethical standards, serves as a reminder, offers an occasion to make a formal commitment to specific values, and assigns responsibility for our actions (p. 178). The proposal didn't strike me as far-fetched.

Just days ago, news broke that Microsoft had laid off its entire ethics and society team, which worked on AI ethics. There was also a documentary about how harmful Instagram and YouTube short-form videos are to adolescents. (They're just as harmful to adults.) The competition for attention in the digital world continues. Watching AI products advance so rapidly, it can seem too late to even start this discussion. It feels as if the system has already taken over my life, making it hard to hold the reins of my own existence, follow the starlight I desire, and live fully in the sunlight. But it's not too late. I want to believe that. It took humanity 1.4 million years to attach handles to stone axes; the web, by contrast, hasn't even existed for 10,000 days (p. 188). I want to gather with people who care about this agenda, even if only within our small corner of the tech industry, and take action. Isn't that the first step toward living without losing control of my own life?